Kaptain Tutorials

Kaptain offers several ways to train models (including distributed), tune hyperparameters, and deploy optimized models that autoscale.

The Kaptain SDK is the best choice for a data science-friendly user experience. It is designed to be a great first experience with Kaptain.

If you prefer to have full control and are familiar and comfortable with Kubeflow SDKs, or YAML specifications in Kubernetes, then we suggest you consult the other tutorials.

Note that everything can be done from within notebooks, thanks to Kaptain’s notebooks-first approach to machine learning.

How to Navigate the User Interface

Central Dashboard

The central dashboard of the Kubeflow UI is the main entry point to Kaptain after logging in:

The central area shows recent pipelines, pipeline runs, notebooks and links to documentation.

The namespace is shown at the top.

Sidebar

The menu on the left has the following entries:

Home

Notebooks

Tensorboards

Volumes

Experiments (AutoML)

Experiments (KFP)

Pipelines

Runs/Recurring Runs

Artifacts

Executions

MLflow

NGC catalog

These are discussed in more detail below.

Volumes

The Volumes page shows a list of created persistence volumes in the user’s namespace and allows to manage (create and delete) them via Volume Manager UI.

Tensorboards

On the Tensorboards page, users can create and configure TensorBoard instances. TensorBoard is a tool for providing the measurements and visualizations needed during the machine learning workflow. It enables tracking experiment metrics like loss and accuracy, visualizing the model graph, displaying images, text, audio data, and much more.

To set up a new TensorBoard instance, select New TensorBoard at the top right in the Tensorboards page.

Specify a name for the new instance, choose the storage type, and specify the location of log files to be parsed by TensorBoard.

WARNING: Object Store configuration via the UI is currently not supported. Is it possible to use AWS S3 or MinIO object storage with TensorBoard by setting AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY and S3_ENDPOINT environment variables in TensorBoard K8s Deployment resource.

Refer to the MNIST with TensorFlow tutorial for more details about integrating a Tensorflow application with Tensorboard when running code from a notebook cell or in distributed mode via TFJob.

When the instance is ready, select Connect to open the TensorBoard UI:

Pipeline

Pipelines are available from the Pipelines menu. Details on how to create pipelines are in the pipelines tutorial.

Experiments and Runs

A list of experiments and pipeline runs is available in the Experiments (KFP) menu. It shows a list of runs along with their status, duration, and model performance metrics like accuracy and loss.

Pipeline Run Logs

Select a single run in the Runs page to display logs for individual steps. This is particularly helpful when debugging pipeline steps. Each step logs its inputs and outputs, which can be accessed via the Input/Output tab.

Pipeline Artifacts

Input and outputs of steps, also known as artifacts, are stored in the Artifacts Store. These are available in the Artifacts menu. The lineage of pipeline artifacts is displayed in the Lineage Explorer tab.

Notebooks

You can set up Notebook servers from the Notebooks menu. From there, you can choose a quick-start image for any of the supported deep learning frameworks: TensorFlow, PyTorch, and MXNet. Each quick-start image comes in two flavors: CPU and GPU. The latter has all the drivers needed for training on GPUs included. Custom images can also be provided.

How to Set Up a Notebook

A notebook server is the entry point to cluster resources for machine learning. Each notebook is a Docker container with Jupyter and various frameworks included out of the box.

To set up a new notebook:

Select the Notebooks page from the sidebar. If no notebook servers have been set up previously, the list is empty.

Select New Notebook at the top right.

A new page opens to configure the notebook.

The notebook requires a Name and a Namespace associated with it. Multi-tenancy in Kaptain is by namespace, so resources and access to these are restricted by namespace. You can set up as many notebooks within a namespace as required. Users with access to the namespace will have access to all notebook servers within the same namespace.

Select the Docker Image for the notebook from the drop-down menu, or enter it manually. The images included in Kaptain are quick-start images designed to help you get started in Kaptain with ease. Each quick-start image includes Spark, standard Python libraries, and a single deep learning framework: TensorFlow, PyTorch, or MXNet. Each image comes in two flavors: CPU and GPU. These are identical, except for GPU drivers and GPU-enabled framework libraries.

If you run CPU-only machine learning workloads from a notebook, choose the CPU images, as these are typically a few GB smaller and therefore load more quickly. For notebooks that can run on CPU, GPU, or a combination thereof, select one of the GPU images, indicated with the suffix “-gpu”.

Custom images can be added by an administrator to be made available to the entire organization. Such custom images can include company-internal libraries or frameworks compiled against specific hardware.

Each notebook server receives a number of resources attached to it:

CPUs (mandatory)

RAM (mandatory)

GPUs (optional)

These resources are per pod. You can also configure resource limits in the Advanced Options section. The GPU vendor is always NVIDIA, but it must be selected.

Code run from within a Jupyter notebook on Kaptain runs on these resources. This means that it is possible to restrict the number of resources attached to a particular notebook server, even though the cluster may have more resources available for additional workloads or other notebook servers.

The next configuration option for a notebook server allows Workspace Volumes to be added. This enables notebook workspaces to be persisted. Data volumes can also be mounted to notebook servers. These are mounted to each pod on the notebook server.

Affinity/Tolerations are advanced configuration options that allow the notebook’s workloads to run on specific resources within the cluster using toleration groups. This allows for separation of, say, development and production workloads on the same underlying Kubernetes cluster without having to set up multiple clusters. It also enables specific workloads to be run on dedicated hardware, for example large-scale image processing on state-of-the-art GPUs that are off limits for other workloads or notebook servers.

Custom configurations, such as for instance secrets, can be added to the notebook server with the Configurations option. The toggle for Enabled Shared Memory under Miscellaneous Settings is recommended as certain machine learning frameworks (for example PyTorch) require it.

Once you have completed the required configuration options for the notebook server, the Launch button becomes active and can be selected. The setup may take a few minutes depending on the size of the notebook image chosen. The progress can be monitored on the Notebooks page.

Please consult Kubeflow’s documentation on setting up notebook servers for additional details.

Notebook Servers can be stopped and resumed (in other words, scaled-down or up) to free up allocated resources such as GPU or memory and make them available to other workloads. To stop a notebook server, press the ■ button on the right side of the notebook list. Resume the notebook by pressing the ► button. It is also possible to configure Kaptain to automatically scale down idle notebooks. More information is located in the Auto Cleanup Settings.

Jupyter Notebooks

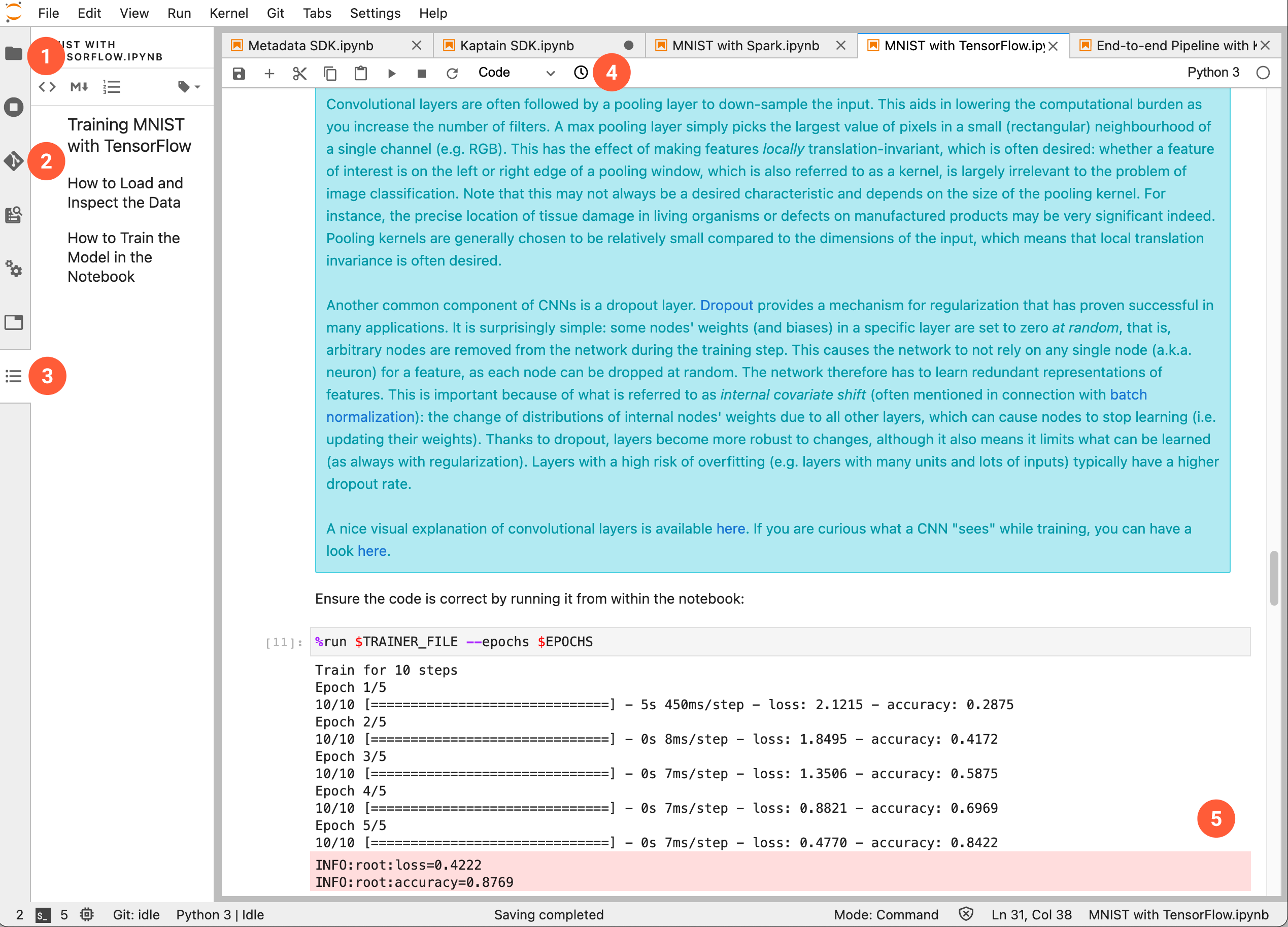

Once a notebook server has been set up, a familiar Jupyter notebook environment is available:

The numbered sections are as follows:

Directory tree on the notebook server

Visual git module

Table of contents for the currently visible notebook

Notebook diff viewer

Notebook cells with embedded output

Additional details on the JupyterLab environment can be found in the JupyterLab documentation.

Katib

Katib is the hyperparameter tuner and neural architecture search module in Kaptain. To learn how to create hyperparameter tuning experiments, read the tutorial.

These experiments can be accessed through the Experiments (AutoML) menu.

After selecting one experiment, a chart of the main objective and different hyperparameter values is shown.

The experiment details contain 4 tabs:

The Overview tab shows the hyperparameters of the best trial in the experiment.

The Trials contain hyperparameters for each trial.

Details includes objective, parameters, algorithm, metric collector of the experiment.

The YAML section has a YAML representation of the launched experiment.